MCP is Powerful yet Dangerous Without a Control Plane for the Physical World

AI agents have evolved from assistants to operators within a matter of months. With the rapid adoption of tool-use protocols like the Model Context Protocol (MCP), agents can now call APIs, trigger workflows, modify system configurations, and increasingly, interact with physical infrastructure. This is not an incremental change; it marks a fundamental shift in how software interacts with the real world.

But there is a problem the industry has not yet directly addressed: we are giving agents the ability to carry out high-consequence actions like deploying infrastructure, modifying network configurations, triggering CI/CD pipelines, signing and distributing software, but without any governance layer between intent and execution. Agents plus tools without a control plane equal systemic risk.

The Control Plane Race Has Started and the Hardware Trust Layer Is Unclaimed

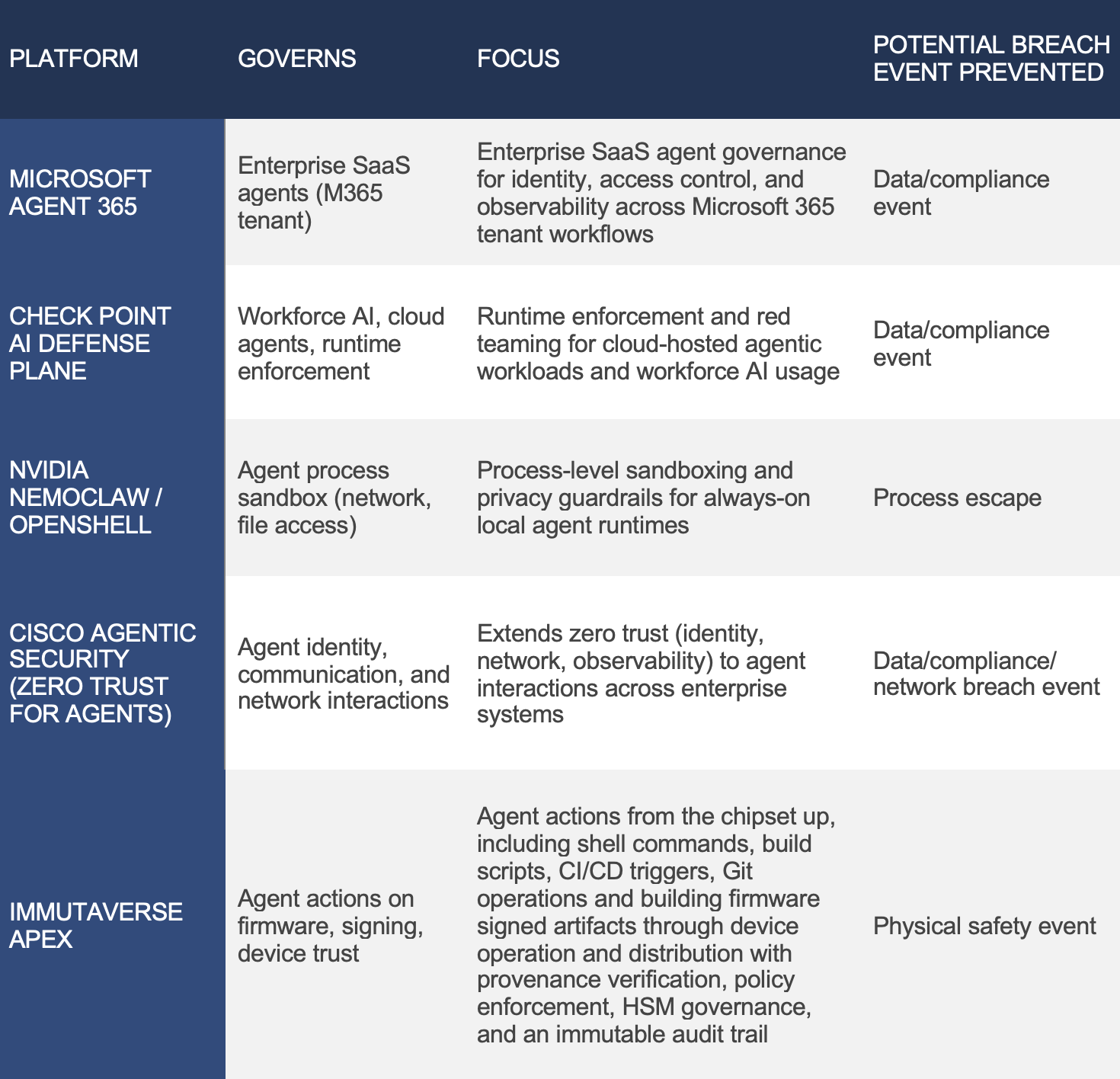

This week at RSAC 2026, the AI agent governance category went from emerging to crowded overnight. Microsoft announced its Agent 365, which will be generally available May 1, describing it as “the control plane for agents,” governing fleet visibility, identity, and access control across enterprise SaaS workflows. Check Point launched its AI Defense Plane the same morning, combining runtime enforcement, red teaming, and agentic governance built on its Lakera and Cyata acquisitions. At GTC 2026 last week, NVIDIA announced NemoClaw, an open-source security and privacy stack layered on top of OpenClaw, with its OpenShell runtime enforcing policy-based sandboxing at the process level for always-on agent workloads.

These are serious products from serious companies. They govern what agents do inside enterprise software stacks: which SaaS tools they can call, which data they can read, which emails they can send.

None of them govern what happens when an agent acts on the hardware trust layer.

Microsoft Agent 365 enforces least-privilege access over MCP servers and user data inside a Microsoft 365 tenant. It has no concept of an HSM, a secure boot chain, or an OTA firmware distribution pipeline. Check Point’s AI Defense Plane covers workforce AI, AI applications, and cloud-hosted agent workflows. Their separate IoT product line addresses device security, but no one at Check Point appears to be asking what happens when an AI agent is the entity triggering the firmware update. NemoClaw’s OpenShell sandboxes the agent process itself with YAML-defined network and file access policies, but it stops at the process boundary. It does not govern what that process hands to a signing key.

NemoClaw secures the agent process. Microsoft and Check Point secure the agent’s access to enterprise software. Immutaverse secures what agents do from the chip up. These are not competing products, they address different consequence classes, and only one matters when a misconfigured agent action reaches a physical device fleet.

The regulatory frameworks governing manufacturers of connected devices—UN R155/WP.29, DO-326A/ED-202A, EU CRA, ETSI EN 303 645, NIST IR 8259, US Federal IoT Cybersecurity Improvement Act, and EO 14144—require firmware integrity as a first-order compliance obligation. None of the enterprise agent governance platforms are building for those compliance regimes.

The Illusion of Safety

MCP and similar frameworks are often described as “a standard way for AI to call tools.” That’s accurate, but it’s incomplete in a way that matters.

MCP defines how tools are described, how they are invoked, and how data flows between agent and system. However, it does not determine whether the action is safe, if the agent should be allowed to perform it, whether the data being used is trustworthy, or whether the outcome is auditable or compliant. MCP assumes the tool is safe to call, but it does not make it safe.

This distinction matters because the industry has quietly crossed a threshold. We are no longer asking “What does this data mean?” We are asking “Go do something about it.” And the “something” increasingly includes operations that, if executed incorrectly or maliciously, have consequences that extend beyond the software layer.

Code Signing Is a Trust Anchor and an Attack Surface

Among all actions an agent can now perform, code signing deserves particular scrutiny. Code signing serves as the ultimate trust anchor in the software supply chain. A valid signature tells every downstream systems (installers, update mechanisms, secure boot chains, and end users) that this artifact is authentic and approved. If an agent can perform a signing operation without policy enforcement, provenance verification, or human-in-the-loop approval, the consequences are real. They are the same consequences the industry has already seen with SolarWinds, Codecov, and 3CX, except that the attack vector now includes prompt injection.

What We Know and What We Don’t

There is not yet a widely publicized incident where an AI agent triggered malicious software in production. But the attack surface already exists, and the building blocks are well-documented:

Prompt injection is a recognized vulnerability category (OWASP Top 10 for LLM Applications). Untrusted input like support tickets, log files, README content, API responses can manipulate agent behavior. Johann Rehberger’s “Month of AI Bugs” in August 2025 documented this across every major coding agent platform. OWASP LLM01:2025 formally classifies indirect prompt injection via untrusted external content as the primary LLM risk category.

Tool-enabled agents can and do carry out unintended actions when manipulated. This has been demonstrated repeatedly in research and in production environments. Aikido Security’s PromptPwnd disclosure (December 2025) provided the first confirmed real-world demonstration: untrusted GitHub issue content reached AI agent prompts in CI/CD workflows, with the agent then executing privileged commands. Google’s own Gemini CLI repository was affected and patched within four days of responsible disclosure; at least five Fortune 500 companies had the same exposure pattern.

The MCP-to-execution path is closed by a named CVE. Lakera’s zero-click RCE via MCP (CVE-2025-59944) showed an attacker-authored payload delivered through a Google Docs file harvesting secrets from a developer IDE with no user interaction required.

CI/CD pipelines and signing systems are already high-value supply chain targets. The attack patterns are established; only the entry point is new.

Agentic workflows are assembling these capabilities into production control planes without a governance layer.

Taken together, these aren’t just theoretical concerns - they are documented capabilities that have already converged in real-world environments.

The Risk is Now Greater Than the Sum of Its Parts

We now have all the building blocks for a new class of supply chain attack. The chain looks like this:

Untrusted Input → Prompt Injection → Agent Manipulation → Tool Invocation → CI/CD Execution → Signed Artifact → Distributed Compromise

No single step in this chain is hypothetical. Each has been demonstrated independently. The convergence of an end-to-end attack that starts with prompt injection and culminates in a signed, distributed compromise is plausible today. Simon Willison named this the “Lethal Trifecta” (June 2025): access to private data, exposure to untrusted content, and the ability to communicate externally. Johann Rehberger formalized the end-to-end pattern as the “AI Kill Chain”. The firmware signing layer is precisely where the Lethal Trifecta terminates with physical-world consequence, and it is the gap that no current agent control plane addresses.

This isn't just a model problem. It's a control plane failure and a hardware trust issue that no enterprise agent governance platform is designed to address.

Let’s make it concrete: imagine a support ticket, a log file, or a README containing malicious instructions buried in natural language. An agent ingests it as context, interprets it as valid guidance, and invokes a firmware signing workflow. The CI/CD pipeline runs exactly as designed. The firmware is signed with a valid key. It ships. Nothing was “hacked.” Every system did what it was told. But the system is compromised—and the compromise carries a valid signature.

Why should we care?

MCP adoption is accelerating across every major agent framework. Enterprises are wiring agents into production CI/CD pipelines, cloud provisioning, and device management systems today. The security models governing these integrations were designed for human-initiated, request-response workflows, not for autonomous, multi-step agent chains that can execute dozens of tool calls in seconds without a human in the loop. The gap between agent capability and agent governance is widening with every deployment.

The enterprise agent governance platforms launched this week will govern most of those deployments. They will not govern those that reach firmware, secure boot chains, or physical devices. That is the deployment class where a misconfigured action is considered a safety event, not just a compliance incident.

The Missing Layer

Today’s agent-to-tool architecture for high-consequence systems looks like this:

AI Agent → MCP → Execution

There is no layer for policy enforcement, risk evaluation, provenance verification, secure execution, or auditability. The agent decides what to do. MCP routes the call. The tool executes. No one asks: should this action be allowed?

The enterprise platforms are now including that question for SaaS workflows, but nobody is asking this question for the hardware trust layer.

Introducing Immutaverse APEX: the agentic policy & execution plane for the physical world

At Immutaverse, we are building the governance layer that sits between agent intent and hardware-world execution, enforcing policy before actions take place. Think of it as what IAM, TLS, and policy engines were to the cloud—but for agents operating on physical systems. We have started building it, beginning with the domain we know best: firmware signing and software supply chain security for IoT, OT, automotive, and critical infrastructure.

The architecture:

Agent → MCP → Immutaverse APEX → CI/CD + Firmware Signing

How it differs from what launched this week

Analysis of these agentic governance offerings reveals a consistent and critical blind spot:

Microsoft Agent 365 enforces least-privilege access by controlling which users, data, and MCP servers agents can use, extending conditional access and traffic filtering from users to agents. This is fundamentally an enterprise SaaS governance model: it treats agents as managed identities inside a Microsoft 365 tenant. It has no concept of firmware, hardware trust anchors, or physical-world execution. Its threat model ends at the cloud application boundary.

Check Point’s AI Defense Plane expands into workforce AI security, AI application protection, and runtime enforcement for autonomous agents. However, its IoT/OT capabilities (e.g., Quantum IoT Protect) remain a separate product line focused on device and firmware runtime protection—not governance of AI agents acting on those systems. The two layers do not converge. No one is asking: what happens when an AI agent is the entity triggering the firmware update?

NVIDIA NemoClaw / OpenShell secures the agent process itself through sandboxing and policy-defined constraints on file system and network access. It governs how the agent behaves at runtime—but stops at the process boundary. It does not govern what that process hands to a signing key or deploys into a device ecosystem.

Cisco’s newly announced approach to securing the “agentic workforce” extends zero-trust principles—identity, network enforcement, observability, and policy—into agent interactions across enterprise systems. It strengthens how agents authenticate, communicate, and operate across infrastructure. But like the others, its control model is anchored in enterprise IT environments. It governs access, communication, and behavior within networks—not the integrity of firmware pipelines, hardware trust chains, or the consequences of agent actions on physical systems.

Across all four, the pattern is clear: these platforms govern who agents are, what they can access, and how they behave in software environments. None govern what happens when an agent’s action crosses into the hardware trust layer—firmware signing, secure boot chains, and physical device fleets.

Immutaverse closes this critical gap.

We believe the hardware trust layer requires:

Provenance verification. Where did this build artifact come from? Can we trace it to a known, trusted source via in-toto, Sigstore, or SLSA attestation?

Policy enforcement. Does this action comply with organizational policy, sector-specific regulatory requirements (NERC CIP, WP.29, IEC 62443), and role-based access controls?

Risk-based controls. What is the blast radius of this action? Should it require additional approval, rate limiting, or human-in-the-loop review?

Secure execution. Is the execution environment attested and hardened? Are signing keys protected by HSMs or equivalent controls?

Auditability. Is every action logged with an immutable, tamper-evident audit trail that meets compliance and forensic requirements?

These functions transform signing from a trust assumption into a verifiable, policy-enforced capability that no enterprise agent control plane currently provides.

Why This Matters Beyond Firmware Security

Firmware signing is our starting point, not our ceiling. The control plane pattern applies wherever agents interact with high-consequence systems:

Infrastructure provisioning that prevents agents from spinning up unauthorized cloud resources or modifying production environments without governance

Network configuration to ensure firewall rules, routing tables, and access policies are not modified without policy enforcement

IoT/OT device control that governs firmware updates, configuration changes, and operational commands to devices that affect the physical world

Critical infrastructure systems in energy grids, water treatment, transportation networks, and other systems where a misconfigured agent action could have cascading physical consequences

In each of these areas, the question remains the same: who governs the agent? The answer today, in most implementations, is “nobody” because the platforms that just launched this week are not designed for these environments.

The Regulatory Tailwind

This is not only a technical problem. Regulatory frameworks across three of Immutaverse's target sectors are converging on the same conclusion, and none of them currently account for AI agents as actors in the supply chain, i.e.

The EU Cyber Resilience Act mandates secure-by-design for connected products, including software update mechanisms. Enforcement timelines are already in motion with 2026 compliance due dates.

UNECE WP.29 / UN R155: requires automotive OEMs to maintain a Cyber Security Management System covering the full vehicle software lifecycle, including firmware update integrity and supply chain security. Applies to all new vehicle type approvals globally.

DO-326A / ED-202A: the airworthiness cybersecurity standard for civil aviation requires demonstrable integrity of airborne software throughout the development and update lifecycle, directly implicating firmware signing and provenance.

IEC 62443: the primary international standard for industrial OT/ICS security, referenced in EU CRA implementation guidance and required by major critical infrastructure operators for vendor qualification. Applies across energy, manufacturing, and industrial IoT supply chains.

NIST SP 800-218 (SSDF) and the Secure Software Development Attestation Form require organizations to demonstrate software supply chain integrity for US federal procurement — increasingly a condition of doing business with defense and civilian agencies.

US Executive Order 14144 (as amended) continues to tighten requirements for SBOMs, provenance, and supply chain transparency across federal software procurement.

The gap between what these frameworks require and what current agent architectures provide is significant. Organizations that build APEX-class governance into their agent workflows now will stay ahead of the compliance curve, while those that don’t will find themselves explaining to regulators and customers why their AI-driven pipelines cannot demonstrate the controls their sector frameworks already require.

Our Next Step: Open Source and Collaboration

We are exploring open-sourcing our control plane MVP. Our belief is that this layer is like TLS, certificate transparency, and SBOM standards that need to be a shared interoperable foundation, not a proprietary black box. We’re designing the architecture to be:

Protocol-agnostic to work with MCP today and extensible to future agent-tool protocols

Policy-engine compatible to integrate with OPA, Cedar, and existing zero-trust policy frameworks

Supply-chain native built on in-toto, Sigstore, and SLSA provenance standards

Audit-first with immutable logging for compliance, forensics, and continuous assurance

The goal is to flesh-out a layer that any organization can adopt, inspect, and build upon because governance infrastructure only works if it is trusted, and trust requires openness. Microsoft and Check Point are building proprietary control planes for their ecosystems. Let’s build an open standard for the hardware trust layer.

We’re Looking for Collaborators

If you are working in any of these areas, we want to hear from you:

MCP implementations and agent tooling frameworks

CI/CD security and pipeline integrity

Firmware signing, secure boot, and device trust

IoT/OT/embedded systems security

Policy engines, zero trust architecture, and identity governance

Software supply chain security (in-toto, Sigstore, SLSA, SBOM tooling)

Critical infrastructure cybersecurity (energy, water, transportation, automotive, aerospace & defense)

Whether you’re building, researching, or deploying in these spaces, we’d like to compare notes, explore integration points, or co-develop the open standard this industry needs.

Final Thought

MCP provides agents with a structured way to interact with the world. That is genuine progress. But a well-formed API call that deploys compromised software to ten thousand vehicles, grid sensors, or medical devices is a catastrophe.

Microsoft, Check Point, and NVIDIA are building the governance layer for agents in the enterprise software stack. That layer is necessary and important. It is not sufficient for the hardware trust layer.

If agents are going to operate in the physical world, we need a layer that ensures their actions on hardware trust systems are safe, enforceable, and verifiable.

If we don’t build it together, we’ll learn the hard way after the first agent-driven supply chain incident, so join us today!